A brief introduction to Registered Reports

When a study's methodology is peer reviewed before data collection, researchers can reduce bias in their work and increase their chances of getting their Registered Reports published in one of more than 250 participating journals.

What are registered reports?

What happens when you put researchers under pressure to get "good results"? It works against one of science's ultimate objectives: producing high-quality research that is published regardless of the outcome. For that to become a reality, results must first become a dead currency in the assessment of quality.

To make editorial decisions blind to results, we need to ask what gives hypothesis testing its scientific value. The answer is the question it asks and the quality of the method it uses, never the result it produces. This is exactly what Registered Reports seek: a reduction in publication bias.

Registered Reports are not a new idea:

"What we need is a system for evaluating research based only on the procedures employed. If the procedures are judged appropriate, sensible, and sufficiently rigorous to permit conclusions from the results, the research cannot then be judged inconclusive on the basis of the results and rejected by the referees or editors. Whether the procedures were adequate would be judged independently of the outcome".

-Robert Rosenthal (1966) "Experimenter effects in behavioral research"

How do registered reports work?

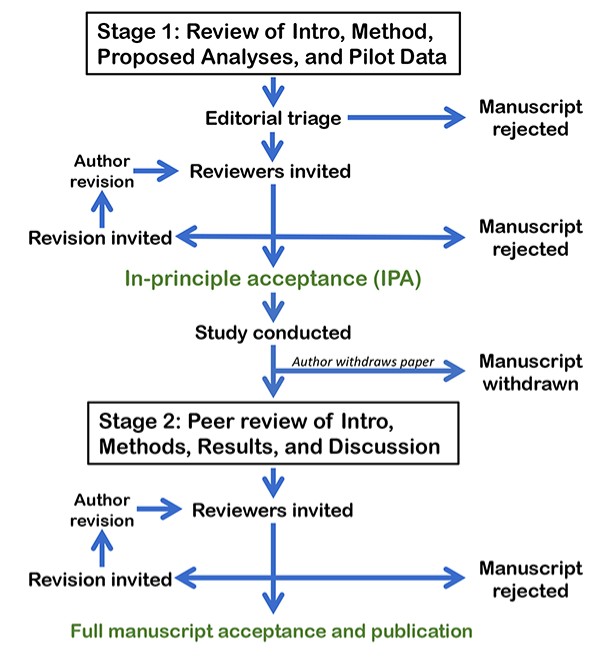

The diagram above shows the phases a Registered Report goes through. They are split into two stages, with peer review happening both before and after data collection. On average, each stage takes about 10 weeks, with up to two rounds of in-depth review per stage, according to the European Journal of Neuroscience (figures for the journal Cortex).

The process starts when authors submit a Stage 1 manuscript that includes an Introduction, Methods, and the results of any pilot experiments that motivate the research. Next, editors and reviewers assess the protocol. If everything is in order, the manuscript can be offered in-principle acceptance (IPA), which means the journal virtually guarantees publication provided the authors conduct the experiment according to the approved protocol.

After data collection, authors resubmit a Stage 2 manuscript that includes the Introduction and Methods from the original submission plus the Results and Discussion. Authors may be required to deposit the data in a public, freely accessible archive and to share any data analysis scripts. Once this process is complete, the final article is published like a standard research article, giving readers high confidence that the work is free of questionable research practices.

Some may argue that this pre-specified process limits procedural flexibility and analytic creativity. However, the format has clear mechanisms to avoid limiting scientific discovery. Deviations from approved procedures are permitted if the authors report them to the editorial board immediately and then again in the final published report. Although authors are required to report the results of the pre-registered methods, they are free to conduct and report additional unregistered exploratory analyses. Those outcomes are reported in a separate section of the Results so that readers can distinguish between confirmatory and exploratory results.

Why are registered reports important?

First, a clear benefit for authors shows up directly in manuscript rejection rates. For example, at the journal Cortex the rejection rate for Registered Reports is just 10%, while for regular submissions it is 90%. This is not the result of lowering the bar for publication. It happens because the Stage 1 review process can solve methodological problems in a study before they arise. Because the design of the study is reviewed before data collection, Registered Reports are more likely to be published in the journal they were originally submitted to, which avoids the time cost of resubmitting to multiple journals after rejections. You invest time upfront, but you get it back at the end.

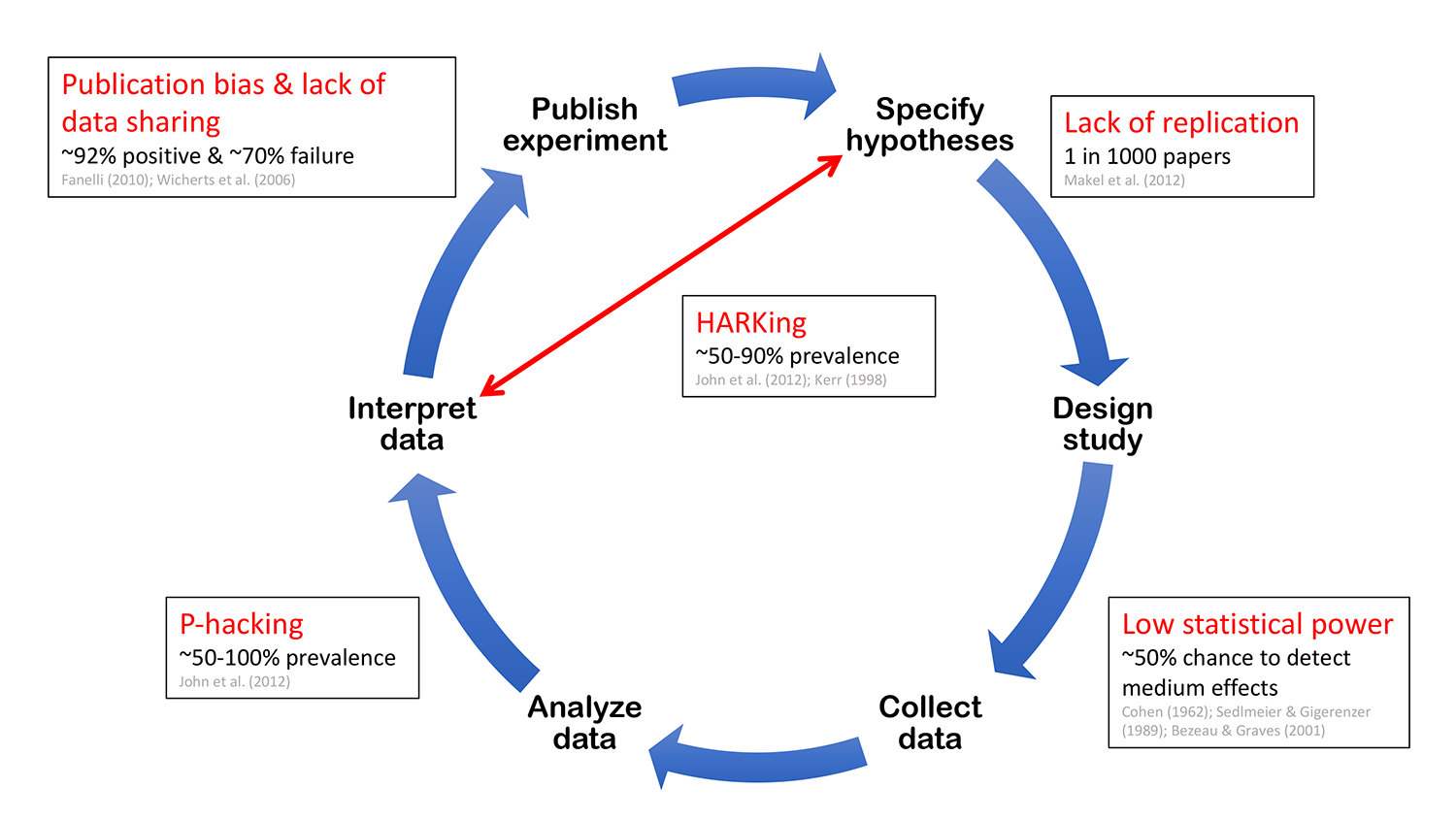

Second, the format helps eliminate a variety of questionable research practices and related problems, including low statistical power, selective reporting of results, and publication bias, while still allowing complete flexibility to conduct exploratory (unregistered) analyses.

- Lack of replication: the phrase "replication crisis" was coined in the early 2010s, and the problem affects social sciences and medicine most severely. Without replication, false discoveries are not eliminated and become the foundations on which future studies are built.

- Low statistical power: increases the likelihood that a statistically significant finding represents a false positive result.

- P-hacking: occurs when researchers keep collecting or selecting data, or keep trying statistical analyses, until nonsignificant results become significant.

"When a measure becomes a target, it ceases to be a good measure."

-Goodhart's law

- HARKing: hypothesizing after the results are known. This involves presenting a hypothesis as a priori when it was in fact generated from the data.

- Publication bias: occurs when journals reject manuscripts on the basis that they report negative or undesirable findings.

- Lack of data sharing: prevents detailed meta-analysis and hinders the detection of data fabrication.
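To make two of the items above concrete, here is a minimal Python sketch of why low power and p-hacking inflate false positives. It is an illustration under stated assumptions, not an analysis taken from the sources cited here: the 10% share of true hypotheses, the power values of 0.8 and 0.2, the z-test with known unit variance, and the "peek every 10 observations" stopping rule are all hypothetical choices, and the function names are made up for this example.

```python
import math
import random


def positive_predictive_value(power, alpha, prior):
    """Share of significant findings that are true effects.

    prior is the assumed fraction of tested hypotheses that are really true.
    """
    true_positives = power * prior
    false_positives = alpha * (1.0 - prior)
    return true_positives / (true_positives + false_positives)


def z_test_p_value(sample):
    """Two-sided p-value for H0: mean = 0, assuming known unit variance."""
    z = sum(sample) / math.sqrt(len(sample))
    return math.erfc(abs(z) / math.sqrt(2.0))


def false_positive_rate(n_studies, max_n, peek_every, alpha, rng):
    """Simulate studies with NO real effect and count 'significant' ones.

    The researcher tests after every `peek_every` observations and stops
    as soon as p < alpha (optional stopping, one form of p-hacking).
    Setting peek_every == max_n gives a single, pre-planned test.
    """
    hits = 0
    for _ in range(n_studies):
        sample = []
        for i in range(1, max_n + 1):
            sample.append(rng.gauss(0.0, 1.0))  # the null hypothesis is true
            if i % peek_every == 0 and z_test_p_value(sample) < alpha:
                hits += 1
                break
    return hits / n_studies


# Low power: same alpha and prior, different power (assumed values).
print("PPV at 80% power:", positive_predictive_value(0.8, 0.05, 0.1))
print("PPV at 20% power:", positive_predictive_value(0.2, 0.05, 0.1))

# P-hacking: one planned test versus peeking every 10 observations.
rng = random.Random(42)
print("Planned test:", false_positive_rate(2000, 100, 100, 0.05, rng))
print("With peeking:", false_positive_rate(2000, 100, 10, 0.05, rng))
```

Under these assumptions, a significant result from a well-powered study is a true effect 64% of the time, against roughly 31% for the low-powered one. In the simulation, repeated peeking pushes the false positive rate well above the nominal 5% even though no effect exists. Fixing the sample size and analysis plan at Stage 1 removes that freedom.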

Finally, the format provides an incentive for researchers to conduct important replication studies and resource-intensive projects that would otherwise be too risky to attempt when successful publication depends on the results.

At Orvium we are working hard to offer our users the best tools for research publishing. Registered Reports are an important one: they improve not only your chances of getting your work out there but, more importantly, the way science works. Check out our app at https://dapp.orvium.io

References

- Center for Open Science https://www.cos.io/

- Participating Journals https://www.cos.io/our-services/registered-reports

- Registered reports at the European Journal of Neuroscience: consolidating and extending peer-reviewed study pre-registration https://onlinelibrary.wiley.com/doi/full/10.1111/ejn.13519